Scope

In today’s post we will talk about Azure audit logs and retention policies. Because retention requirements differ from one industry to another, different approaches are required.

Audit Logs

From past experience, I know that each company and department may understand something different when you say "audit logs". I have been involved in projects where, once a log was tagged as audit, you were required by law to keep it for 20-25 years.

In this context, I think the first step is to define what an audit log is in Azure. In Azure, most audit logs are either activity logs or deployment operations.

The first type is closely related to any write operation (POST, PUT, DELETE) performed on your Azure resources. Read operations are not recorded in the activity logs, but don’t be disappointed: many Azure services provide their own monitoring mechanism for read operations as well (for example, Azure Storage).

The second type of audit is the one generated during a deployment operation. Actions such as creating, deleting or modifying your Azure resources are fully audited.

Retention policy

The default retention period is 90 days. During this period, we can use the Activity Logs feature in the Monitor section of the portal to look at what happened in our subscriptions over the last 90 days. After this period, the audit information is automatically deleted.

There is no configuration that would allow us to increase or decrease this period.

Problem

Of course, this data can be exported using the available API. The biggest problem is not how to export the audit information, but where to put it.

There are so many types of storage and services that can be used for this purpose that it is hard to decide which one to use. Do you want to push the logs to Azure Tables, Azure Blobs, Azure Data Lake, Azure SQL, Azure DocumentDB, or something else?
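
For the export side, which is the easier half of the problem, here is a minimal sketch of how the request for a time slice of the Activity Log could be built. It assumes the Azure Monitor REST endpoint for listing activity log events and the API version `2015-04-01`. Please check both against the current documentation before relying on them. The function name `activity_log_url` is my own, and authentication (a bearer token in the request header) is left out.

```python
from datetime import datetime, timezone
from urllib.parse import quote, urlencode

# Assumed API version; verify against the current Azure Monitor REST reference.
API_VERSION = "2015-04-01"


def activity_log_url(subscription_id: str, start: datetime, end: datetime) -> str:
    """Build the URL that lists Activity Log events between start and end.

    Both datetimes must be timezone-aware; they are converted to UTC.
    """
    fmt = "%Y-%m-%dT%H:%M:%SZ"
    start_utc = start.astimezone(timezone.utc).strftime(fmt)
    end_utc = end.astimezone(timezone.utc).strftime(fmt)
    # OData filter on the event timestamp.
    odata_filter = (
        f"eventTimestamp ge '{start_utc}' and eventTimestamp le '{end_utc}'"
    )
    query = urlencode(
        {"api-version": API_VERSION, "$filter": odata_filter}, quote_via=quote
    )
    return (
        f"https://management.azure.com/subscriptions/{subscription_id}"
        f"/providers/Microsoft.Insights/eventtypes/management/values?{query}"
    )
```

The resulting URL can be called with any HTTP client; the response is paged, so a real exporter would also have to follow the continuation link until no more pages are returned.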

Where to store it?

There is no perfect location. There are two important questions that you need to answer before thinking about a solution:

For how long do I need to keep the audit logs? How often do I need to access them?

The answers to these two questions will influence your approach. I tried to identify 4 main scenarios that are pretty common and can help us get an idea of what the best approach is.

- Scenario 1: Store them for 90 days, with good query capability

- Scenario 2: Store them for 2 years, with a medium level of query capability

- Scenario 3: Store them for 5 years, with good query capability at the beginning, but after 2 years query capability is not so important anymore

- Scenario 4: Store them for 20 years as cheap as possible, without caring about query capabilities (low level of query)
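
The four scenarios can be read as a small decision table. The sketch below is only my way of summarizing them in code. The thresholds come from the scenarios above, while the function name `suggest_storage` and the behavior for values that fall between or beyond the scenarios are assumptions.

```python
def suggest_storage(retention_days: int, needs_query: bool) -> list[str]:
    """Map a retention period and query need to the storage used in each scenario."""
    if retention_days <= 90:
        # Scenario 1: the built-in retention is enough.
        return ["Activity Logs (built-in)"]
    if retention_days <= 2 * 365:
        # Scenario 2: OMS keeps the data searchable for 2 years.
        return ["OMS"]
    if needs_query:
        # Scenario 3: OMS for the first 2 years, Azure Data Lake for the full period.
        return ["OMS", "Azure Data Lake"]
    # Scenario 4: long retention, cheapest possible storage.
    return ["Azure Cool Blob Storage"]
```

For example, `suggest_storage(5 * 365, True)` returns the OMS plus Azure Data Lake combination, and `suggest_storage(20 * 365, False)` returns Azure Cool Blob Storage.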

Scenario 1: Store them for 90 days, with good query capability

This is a no-brainer. By default, the audit data is stored for 90 days inside Azure. Besides this, the Activity Logs blade in Monitor allows us to execute complex queries over this information.

Scenario 2: Store them for 2 years, with a medium level of query capability

From this scenario onwards, there are multiple solutions for the same case. There is no wrong solution, because requirements differ and force the architecture team to find a solution that is as close as possible to the business requirements.

For situations like this, when you need to store audit data for 2 years and need query capability, I would look at OMS (Operations Management Suite). This service offers monitoring capabilities for cloud and on-premises environments.

Two powerful features of OMS make this service a good candidate for this use case.

First of all, it allows us to specify different data sources from which audit data can be fetched. As data sources, we can specify the Log Analytics collections from Azure Diagnostics and Azure Monitor. The audit data is stored in OMS, in its own OMS repository, for 2 years.

Secondly, for those 2 years the data is searchable using the UI that OMS provides. This means that we have query capabilities without having to implement anything.

This scenario can be supported easily, with only a few configuration actions needed on the OMS data sources.

Scenario 3: Store them for 5 years, with good query capability at the beginning, but after 2 years query capability is not so important anymore

My assumption is that even if query capabilities are less important after 2 years, there are still some query requirements.

The proposed solution is based on the previous solution, from Scenario 2, where we used OMS to store the data and offer query capabilities for the first 2 years. The difference is that, in parallel with OMS, all audit logs would be pushed to Azure Data Lake, where a 5-year retention policy would be specified. Azure Data Lake allows us to specify for how long we want to store data, a very useful feature especially for situations like this.

On top of Azure Data Lake, HDInsight or any other big data processing system would be configured to allow us to execute queries over our data. The queries will not run in real time, but this setup should offer good query capabilities while keeping the running cost low.

It is important to note that the audit data would be written to both stores (OMS and Azure Data Lake) from the beginning. I prefer this approach, even though the data is duplicated, because it gives a simpler reporting and query mechanism. Otherwise I would need to query both stores and consolidate the results, which could easily generate misleading information.

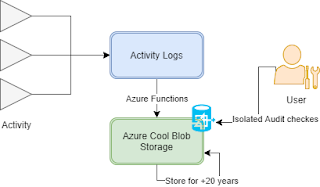

Scenario 4: Store them for 20 years as cheap as possible, without caring about query capabilities (low level of query)

This situation is common for companies that are required by law to keep audit data for a specific time period. They don’t care how easily the data can be retrieved, and cases when the data needs to be accessed are rare.

For these situations, the focus is on finding storage that is extremely cheap and has a good redundancy policy. Azure Cool Blob Storage is a good candidate: it is extremely cheap, has a good redundancy policy and, when needed, can be accessed as easily as any other blob storage.

For this scenario, as for the previous one where we used Azure Data Lake, we will need a mechanism to move the audit data to our storage. This can be done using an Azure Function that is triggered at a specific time interval and moves the new content produced in the last x hours to the storage.
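
One detail worth getting right in such a function is the time window it exports, so that consecutive runs neither overlap nor leave gaps. Below is a minimal sketch of that calculation only, not of the Azure Function itself. The name `export_window` is hypothetical, and I assume that x divides 24 evenly, so that the windows align to midnight UTC.

```python
from datetime import datetime, timedelta, timezone


def export_window(now: datetime, hours: int) -> tuple[datetime, datetime]:
    """Return the last fully elapsed [start, end) window of `hours` hours.

    `now` must be timezone-aware. Windows are aligned to midnight UTC,
    which assumes that `hours` divides 24 evenly.
    """
    now = now.astimezone(timezone.utc)
    midnight = now.replace(hour=0, minute=0, second=0, microsecond=0)
    step = timedelta(hours=hours)
    # Number of complete windows that have elapsed since midnight.
    elapsed = (now - midnight) // step
    end = midnight + elapsed * step
    return end - step, end
```

Because the window depends only on the clock and not on when the previous run finished, a run that fires a few minutes late still exports exactly the same slice.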

We need to be careful how we store data in Cool Blob Storage. Data needs to be stored in such a way that we can access it later with minimal effort. Grouping data by time, region and department can be useful.
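
As an illustration of such a grouping, here is a small sketch that builds a blob name from the date, region and department. The layout (date first, then region, then department) and the name `audit_blob_name` are my own assumptions. Putting the date first makes it easy to find or remove everything from a given period later on.

```python
from datetime import datetime, timezone


def audit_blob_name(window_start: datetime, region: str, department: str) -> str:
    """Build a blob name of the form YYYY/MM/DD/region/department/activity-HHMM.json.

    `window_start` must be timezone-aware; it is converted to UTC.
    """
    ts = window_start.astimezone(timezone.utc)
    return (
        f"{ts:%Y/%m/%d}/{region.lower()}/{department.lower()}"
        f"/activity-{ts:%H%M}.json"
    )
```

For example, a window starting on 10 March 2017 at 06:00 UTC for the Finance department in WestEurope ends up under `2017/03/10/westeurope/finance/`.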

On top of Azure Cool Blob Storage, we can successfully use a system like HDInsight to crunch the data when needed.

At this moment there is no support for auto-deletion similar to the one offered by Azure Data Lake. We will need to define a mechanism that runs every month or year and removes data that is older than 20 years. The good news is that this feature is already planned and I expect to see it available pretty soon (https://feedback.azure.com/forums/217298-storage/suggestions/2474308-provide-time-to-live-feature-for-blobs).

Conclusion

The solutions above are just some approaches for these 4 scenarios. There are multiple ways to solve this problem. I consider that the most important thing to take into account is that there are multiple Azure services that can help us solve our problems. We need to keep our radar on and select the ones that suit our needs.

