Several updates to Azure Redis Cache were announced in the last few days.

If you haven't had the opportunity to use Redis until now, you should know that it is an in-memory data structure store that is often used not only as a database or a cache, but also as a message broker. It is full of features that can make your life easier.

Microsoft offers it as an out-of-the-box service that you can use from the first moment, with no installation or server configuration needed.

Some new features are now appearing in Public Preview that make Azure Redis Cache even more appealing.

Azure Redis Cache Clustering

Until now we didn't have too many options in Azure Redis Cache if we wanted more power or if we reached the size limit of an instance.

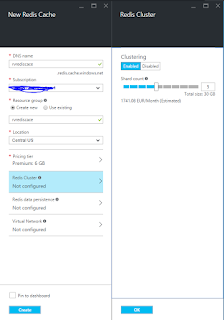

Now, when we create a new instance of Azure Redis Cache, we can activate clustering and specify the number of shards that we need. Don't forget that each shard in the cluster is a primary/replica pair. The replicas are managed by the Azure Redis Cache service out of the box.

Keep in mind that this feature can be activated only for new instances of Azure Redis Cache.

At this moment the maximum size of a shard is 53 GB, and a cluster can have up to 10 shards. This means that you can have an Azure Redis Cache cluster of 530 GB ... WOW ...
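To get a feeling for how a cluster spreads data across shards, here is a minimal sketch in Python. It relies on the documented Redis Cluster rule that a key belongs to hash slot CRC16(key) mod 16384. The mapping from slots to shards (`shard_for_slot`) is only an assumption for illustration: it splits the slots into equal contiguous ranges, which may not match how the service really assigns them. Hash tags (`{...}` inside keys) are ignored to keep the sketch short.

```python
HASH_SLOTS = 16384      # fixed number of hash slots in Redis Cluster
MAX_SHARD_GB = 53       # shard size limit mentioned in the post
MAX_SHARDS = 10         # shard count limit mentioned in the post


def crc16_xmodem(data: bytes) -> int:
    """CRC16 (XMODEM variant, polynomial 0x1021, initial value 0)."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def key_slot(key: str) -> int:
    """Hash slot of a key (hash tags are not handled in this sketch)."""
    return crc16_xmodem(key.encode("utf-8")) % HASH_SLOTS


def shard_for_slot(slot: int, shard_count: int) -> int:
    """Hypothetical mapping: equal contiguous slot ranges per shard."""
    return slot * shard_count // HASH_SLOTS


def total_capacity_gb(shard_count: int = MAX_SHARDS) -> int:
    """Cluster capacity when every shard has the maximum size."""
    return shard_count * MAX_SHARD_GB


if __name__ == "__main__":
    for key in ("user:1", "user:2", "cart:99"):
        slot = key_slot(key)
        print(key, "-> slot", slot, "-> shard", shard_for_slot(slot, MAX_SHARDS))
    print("max cluster size:", total_capacity_gb(), "GB")
```

The key names are made up. The point is only that different keys land on different shards, which is what lets the cluster grow beyond the size of a single instance.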

Azure Redis Cache Data Persistence

How nice would it be to create backups, persist your data from Redis Cache, and access or restore it later on?

Even if it is a cache, you might need to persist the data in case of a disaster. The data is persisted to Azure Storage and can be restored later.

Azure Redis Cache LOVES Azure Virtual Network

Starting now, we can add our instances of Azure Redis Cache to an Azure Virtual Network (VNet). In this way we can isolate our instances of Azure Redis Cache from the public world.

Having the cache in a VNet guarantees that nobody from outside the VNet can access it unless they have access to the VNet.

This acts as an additional security layer at the network level.
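The isolation rule can be modeled in a few lines of Python. This is only a conceptual sketch: the real enforcement is done by Azure networking, not by application code, and both the address range `10.0.0.0/16` and the helper `can_reach_cache` are hypothetical names chosen for illustration.

```python
import ipaddress

# Hypothetical address space of the VNet that hosts the cache.
VNET_RANGE = ipaddress.ip_network("10.0.0.0/16")


def can_reach_cache(client_ip: str, vnet=VNET_RANGE) -> bool:
    """Return True only when the client address belongs to the VNet range."""
    return ipaddress.ip_address(client_ip) in vnet


if __name__ == "__main__":
    print(can_reach_cache("10.0.3.7"))      # a machine inside the VNet
    print(can_reach_cache("203.0.113.10"))  # a machine on the public internet
```

A client with an address inside the VNet range passes the check, while a public address does not, which mirrors the behavior described above.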

Conclusion

The new features that were just announced make Azure Redis Cache a very powerful service that can be used successfully in complex scenarios where the cache is very large and must be isolated from the public internet.
