[IoT Home Project] Part 5 - Send data to Azure IoT Hub, control time interval and refactor the configuration information

In this post we will discover how we can:

- Send all device sensor data that are read from GrovePI to Azure IoT Hub

- Read sensor data at a specific time interval

- Extract all configuration data in a separate file

GitHub source code: https://github.com/vunvulear/IoTHomeProject/tree/master/nodejs-grovepi-azureiot

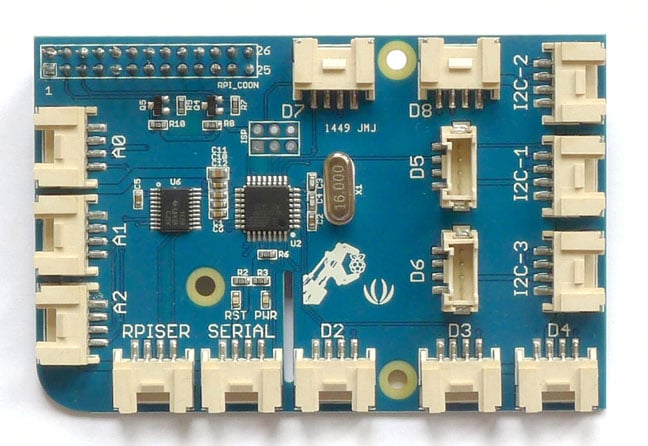

Send all device sensor data that are read from GrovePI to Azure IoT Hub

This is a simple task. The function that reads sensor data from GrovePI already returns all the sensor data. The only thing we need to do is put this information in the message that is sent to Azure IoT Hub.

In the future we will clearly have different types of messages that we send over IoT Hub. Because of this, we add a property to each message sent to IoT Hub that specifies the message type - in our case we'll call the message that contains sensor data 'sensorData'.

    var dataToSend = JSON.stringify({
      deviceId: "vunvulearaspberry",
      msgType: "sensorData",
      sensorInf: {
        temp: sensorsData.temp,
        humidity: sensorsData.humidity,
        distance: sensorsData.distance,
        light: sensorsData.light
      }
    });
    deviceCommunication.sendMessage(dataToSend);
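
Since the same payload shape is likely to be reused, building it can be pulled into a small helper. The following is a minimal sketch, not code from the repository: `buildSensorMessage` is a hypothetical name, the field names follow the snippet above, and the readings are made up.

```javascript
// Hypothetical helper (not part of the original project): builds the JSON
// payload for a 'sensorData' message from the values read from GrovePi.
function buildSensorMessage(deviceId, sensorsData) {
  return JSON.stringify({
    deviceId: deviceId,
    msgType: 'sensorData', // lets the receiver tell message types apart
    sensorInf: {
      temp: sensorsData.temp,
      humidity: sensorsData.humidity,
      distance: sensorsData.distance,
      light: sensorsData.light
    }
  });
}

// Example call with made-up readings
var exampleMessage = buildSensorMessage('vunvulearaspberry', {
  temp: 22.5,
  humidity: 40,
  distance: 120,
  light: 300
});
```

A receiver can then parse the message and branch on `msgType` before reading `sensorInf`.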

Read sensor data at a specific time interval

At this moment we have a 'while(true)' loop that reads sensor information and sends the data to IoT Hub. This works, but what if we want to control how often data is read?

To do this, we can use the 'setInterval' function from Node.js. This function calls a given function repeatedly at a specified time interval. The time interval is specified in milliseconds.

Because Node.js runs callbacks on a single thread, two invocations of the callback never run at the same time: if one call takes longer than the interval, the next one waits until it finishes. This matters when we specify a small interval such as 100 ms and reading the sensors and sending the data takes longer than that. Note that this only covers synchronous work; if sending is asynchronous, a new call can start while a previous send is still in progress.

    function collectSensorData(grovePiSensors, deviceCommunication) {
      var timeIntervalInMilisec = 5000; // 5s
      setInterval((grovePiSensors, deviceCommunication) => {
        var sensorsData = grovePiSensors.getAllSensorsData();
        var dataToSend = JSON.stringify({
          deviceId: "vunvulearaspberry",
          msgType: "sensorData",
          sensorInf: {
            temp: sensorsData.temp,
            humidity: sensorsData.humidity,
            distance: sensorsData.distance,
            light: sensorsData.light
          }
        });
        deviceCommunication.sendMessage(dataToSend);
      }, timeIntervalInMilisec, grovePiSensors, deviceCommunication);
    }
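
`setInterval` does not wait for asynchronous work started inside the callback, so with a short interval, sends could pile up if sending takes longer than the interval. One possible way to skip a tick while the previous one is still in flight is a small guard flag. This is only a sketch under assumptions: `createGuardedTask` is a hypothetical helper, and it assumes the wrapped task returns a Promise, which the project's `sendMessage` may or may not do.

```javascript
// Hypothetical helper: wraps a task so that a new run is skipped while the
// previous run has not settled yet. Returns true if a run was started and
// false if the call was skipped.
function createGuardedTask(task) {
  var running = false;
  return function guardedTask() {
    if (running) {
      return false; // previous run still in flight, skip this tick
    }
    running = true;
    Promise.resolve()
      .then(task)
      .catch(function (err) { console.error('Task failed:', err); })
      .then(function () { running = false; }); // release the guard either way
    return true;
  };
}

// Possible usage (not executed here), where readAndSend is assumed to
// return a Promise that settles once the message has been sent:
//   setInterval(createGuardedTask(readAndSend), 5000);
```

The trade-off is that skipped ticks mean dropped samples, which seems acceptable for periodic sensor readings but may not be for other message types.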

Extract all configuration data in a separate file

At this moment we have configuration data spread across multiple modules. If we want to change something, we need to search for where the configuration is stored, and some information, like the device id string, is duplicated.

This makes the code harder to read, and any change is error-prone and time-consuming.

To avoid these problems, we can create a config.json file in our application that holds all the configuration data. For each module I prefer to create a section that groups the configuration specific to that module ('deviceCommunicationConfig' and 'grovePiConfig').

    {
      "debug" : true,
      "sensorDataTimeSampleInSec" : 5,
      "deviceCommunicationConfig":
      {
        "deviceId" : "vunvulearaspberry",
        "azureIoTHubMasterConnectionString" : "HostName=vunvulear-iot-hub.azure-devices.net;SharedAccessKeyName=iothubowner;SharedAccessKey=+whKMyd08PLDNoaR+yEmToJcHL6wsFo36tAyDBU8Qr0=",
        "azureIoTHubHostName" : "vunvulear-iot-hub.azure-devices.net"
      },
      "grovePiConfig":
      {
        "dhtDigitalSensorPin" : 2,
        "ultrasonicDigitalSensorPin" : 4,
        "lightAnalogSensorPin" : 2,
        "soundAnalogSensorPin" : 0
      }
    }

Once we have all this configuration, we will need to access it. For this we can load the JSON in our application and access the properties of the configuration file.

    var Config = require('./config.json');

    ...

    var grovePiSensors = new GrovePiSensors(Config.grovePiConfig);

    ...

    this.debug = debug;
    this.registry = AzureIoTHub.Registry.fromConnectionString(Config.deviceCommunicationConfig.azureIoTHubMasterConnectionString);
    this.deviceId = Config.deviceCommunicationConfig.deviceId;
    this.azureIoTHubHostName = Config.deviceCommunicationConfig.azureIoTHubHostName;
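
The configuration stores the sampling period in seconds ('sensorDataTimeSampleInSec'), while 'setInterval' expects milliseconds, so a conversion is needed somewhere. A minimal sketch of that step, assuming a hypothetical helper named `getSampleIntervalMs` and an inline object standing in for the loaded config.json:

```javascript
// Hypothetical helper: converts the configured sampling period (seconds)
// into the millisecond value that setInterval expects.
function getSampleIntervalMs(config) {
  var seconds = Number(config.sensorDataTimeSampleInSec);
  if (!isFinite(seconds) || seconds <= 0) {
    throw new Error('sensorDataTimeSampleInSec must be a positive number');
  }
  return seconds * 1000;
}

// Inline stand-in for require('./config.json')
var exampleConfig = { sensorDataTimeSampleInSec: 5 };
var intervalMs = getSampleIntervalMs(exampleConfig);
```

Failing fast on a missing or invalid value avoids passing `NaN` to `setInterval`, which would otherwise fall back to a very short delay.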

A module does not need to receive the whole configuration file. We can pass it only the section that is specific to that module (for example 'Config.deviceCommunicationConfig'). For the details, take a look at the source files.
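
As an illustration of passing only the relevant section, here is a sketch of a module constructor. `DeviceCommunication` below is a simplified stand-in, not the class from the repository: it omits the Azure SDK calls, and the inline `Config` object with placeholder values replaces the real config.json.

```javascript
// Simplified stand-in for the device communication module. It only knows
// about its own configuration section, not the whole config file.
function DeviceCommunication(deviceCommunicationConfig, debug) {
  this.debug = debug;
  this.deviceId = deviceCommunicationConfig.deviceId;
  this.azureIoTHubHostName = deviceCommunicationConfig.azureIoTHubHostName;
}

// Inline stand-in for require('./config.json'), with placeholder values
var Config = {
  debug: true,
  deviceCommunicationConfig: {
    deviceId: 'my-device',
    azureIoTHubHostName: 'my-hub.azure-devices.net'
  }
};

// The caller decides which section each module receives
var deviceCommunication = new DeviceCommunication(
  Config.deviceCommunicationConfig,
  Config.debug
);
```

With this shape, the module can be created in a test with a small hand-written object instead of the full configuration file.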

Conclusion

Mission complete for now. We now push all sensor data to Azure IoT Hub, and we can change the configuration more easily. In addition, we control how often data is pushed to Azure IoT Hub.

Next Step

In the next post we will start storing the data that is pushed to Azure IoT Hub in blobs. On top of this, we will look at how we can calculate average values for the data that was read.

Next post: [IoT Home Project] Part 6 - Stream Analytics and Power BI
