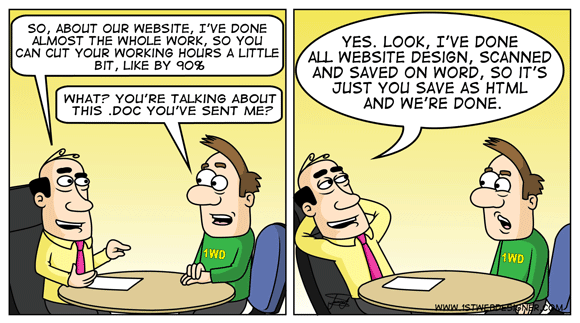

Every day we see a constant fight between clients and technical teams. Clients push one feature after another without giving the teams time to develop them consistently, keep the core in good shape, and extend the core of the solution to support each new feature.

This is typical for clients whose product is software. From the client's perspective it is a normal thing to do, especially since every new feature brings profit. Even when the technical team explains the risks and tries to push a feature back by one release cycle because the product cannot yet support it well, the client doesn't care and requests the implementation anyway.

In such situations we end up with odd solutions that break basic rules of programming. Then, after the feature is released to production, we have to fight bugs that are extremely dangerous and can bring the whole system down within minutes or hours.

Right now I have three situations in mind. They differ from a technical perspective, but all of them have the same root cause.

Story 1

Client A wanted to be able to control the system from an external component or device (from a plugin, for example). Imagine a system that manages all the electronic devices in a company. By design, and because of security concerns, each device can share data only with the core of the system. Moreover, a device can be controlled only by the core. For example, a device cannot turn another device on or off.

One day a new device appears that needs to control the rest of the devices, which until now were managed by the core system. The best approach would be to rethink the system so that it can support this feature. But because of the time limit and the strategic positioning of that feature, a dirty fix was required.

The solution that ended up in production was based on reflection. Because part of the external device's plugin also runs in the same process as the core system, reflection was used to reach the core functionality that controls other plugins.
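To make the shape of that dirty fix concrete, here is a minimal sketch in Python. The original system's language and class names are not given, so `Core`, `ControllerPlugin`, and `__set_power` are hypothetical, and Python's runtime attribute lookup stands in for whatever reflection API was actually used.

```python
class Core:
    """Hypothetical core: by design, the only component that switches devices."""

    def __init__(self):
        self._power = {}  # device id -> True (on) / False (off)

    def register(self, device_id):
        self._power[device_id] = True

    def is_on(self, device_id):
        return self._power[device_id]

    def __set_power(self, device_id, on):
        # Intended for internal use only. Python mangles this name to
        # _Core__set_power, so it is not part of the public interface.
        self._power[device_id] = on


class ControllerPlugin:
    """Hypothetical plugin that runs in the same process as the core."""

    def __init__(self, core):
        self._core = core

    def turn_off(self, device_id):
        # The dirty fix: look up the private method by its mangled name at
        # runtime instead of going through a supported public API.
        set_power = getattr(self._core, "_Core__set_power")
        set_power(device_id, False)


core = Core()
core.register("printer-1")
ControllerPlugin(core).turn_off("printer-1")
print(core.is_on("printer-1"))  # False
```

The plugin works, but it now depends on a private name that the core team is free to rename or remove, and the security rule that only the core controls devices is silently bypassed.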

Story 2

Client B requests to see custom logs of a system for the last 3 days. You design a solution that works perfectly, stores the last 3 days of information in the database, and so on.

Five days before the release, the client tells you that he wants to display and manage the logs for the last 30 days. From an implementation perspective, you only need to change a constant from 3 to 30. The client also knows that this value can be changed very easily and pushes the change very aggressively.

What could happen in the end? You change the value, run all the necessary tests, release to production and... after a few days the whole system goes down. You investigate and realize that the root cause was changing that small value from 3 to 30 (and all this time the system is down).

Why does something like this happen? All the devices around the system started sending their logs to the main server, which stored and processed everything newer than 30 days. Keeping 30 days instead of 3 means the database has to deal with 10 times more data.

In this case, the database server overwhelmed the hard disks of the main server and brought the whole system down.
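A quick back-of-the-envelope estimate would have exposed the problem before the release. The sketch below is only an illustration: the device count, log rate, and entry size are invented numbers, and only the retention values 3 and 30 come from the story.

```python
def retained_bytes(devices, entries_per_device_per_day, bytes_per_entry, retention_days):
    """Steady-state volume of log data kept in the database."""
    return devices * entries_per_device_per_day * bytes_per_entry * retention_days


# Hypothetical workload, not taken from the real system.
DEVICES = 2_000
ENTRIES_PER_DEVICE_PER_DAY = 50_000
BYTES_PER_ENTRY = 200

old = retained_bytes(DEVICES, ENTRIES_PER_DEVICE_PER_DAY, BYTES_PER_ENTRY, 3)
new = retained_bytes(DEVICES, ENTRIES_PER_DEVICE_PER_DAY, BYTES_PER_ENTRY, 30)

print(f"3 days:  {old / 1e9:.0f} GB")   # 3 days:  60 GB
print(f"30 days: {new / 1e9:.0f} GB")   # 30 days: 600 GB
print(f"growth:  {new // old}x")        # growth:  10x
```

Whatever the real numbers are, the ratio stays at 10, so the question to ask before changing the constant is whether the disks and the queries were ever sized for ten times the data.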

Story 3

Client C has an idea for a new feature. After discussing it with the technical team, they decide to design and implement a prototype.

During implementation, the team realizes that the feature needs a custom protocol. Because this is only a prototype, they decide to integrate a 3rd party library. The library has a lot of bugs, but the client also accepts this compromise in order to finish the prototype and see whether the feature has potential on the market.

The technical team tells the client that this is only a prototype and that, when the feature is integrated into production, they will need X to Y days to implement the protocol in a robust way.

The prototype is a success and the client decides to include the feature in the next release, but he ignores the fact that the 3rd party library used for the protocol is not ready for production. He is not willing to invest the time needed for a robust implementation.

These 3 simple examples show how hard it is to convince clients that releasing a feature or a product too fast can be harmful. It is extremely hard, if not impossible, to explain to a client that he needs to wait 3 months (and lose a possible new contract or partnership) until the product is ready to support a specific feature.

Clients will always make this push, and they will push features in the most aggressive way. They will tell you that they know the risk, that they accept it, and that they are fully responsible for it. But in the end, if a disaster happens, the technical team will have to dig into the problem to see why the solution is not working, and the team will be blamed: “You wrote the application, not me,” the client says. At the end of the day, from the client's perspective, the technical team is the one responsible.

Handling such requests is pretty hard. The hardest thing is to say NO and explain to the client why this is not possible. In the end, even if you explain the risk in the best possible way and try to convince him that this is not possible now and that he needs to wait 3 more months, he will say: “I assume the risk, go for it”.

“I assume the risk, go for it”... That's easy to say :)

I like to put it in terms of cost: "We can do it quick and dirty, and it will cost you 10K now and 10K each month from now on until we make it clean. Or we can do it the right way and it will cost you 30K".